Today, Astera Institute is launching Radial, a division which reimagines how life sciences research happens at a systems level. Radial will be led by Becky Pferdehirt as CEO. We are committing up to $500M over the next decade to expand our build-test-learn approach to scientific infrastructure and practices.

How we fund, do, and build upon science in the U.S. has long needed an update. We’re at a historic inflection point with AI acting as a forcing function on biology — not because it will immediately solve hard problems, but because its demands and widespread adoption will, increasingly, expose how unfit for purpose our scientific infrastructure actually is. We also have more tools than ever before to find new solutions. But positive change isn’t inevitable, which is why Astera is expanding its efforts through Radial.

Radial is grounded in two key beliefs. First, scientific practices for impactful discovery—from how we design methods to how new knowledge is shared and translated into real-world use—need to be rebuilt from the ground up. Second, it’s very difficult to go about this without deliberate iteration through active research efforts. In other words, we need to experiment with how science is done through actual science and scientists.

Our starting framework

Radial is going to try a broader range of things in the beginning that will drive our own evolution. We will be looking for more radical experiments that can give more information about what’s possible, regardless of whether they succeed or fail in the classic sense. We will iterate on:

- What science gets done.

We are thinking about what gets funded as well as what scientists decide to work on in the first place. We have more ways than ever to traverse the white space with data and modeling, not just opinions and trends. And we’re happy to work with anyone and any sector that prioritizes impact, utility, and metascience experimentation.

For example, we’re working with industry partners to leverage existing tools and laboratory infrastructure to generate open, high-quality datasets. With OpenADMET, we are characterizing small molecule properties (ADME and toxicity) so that the data can be explored and used to train models with real-world utility. We think there could be more general potential here: take the unique, cutting-edge platform capabilities of start-ups and point them at public-good problems. It’s roughly the inverse of Focused Research Organizations (non-profit start-ups), and we think it could one day become a more generalizable model that addresses distinct gaps in a complementary way.

- How science is organized.

Our institutions are built for an era that emphasizes discrete projects and individual achievement. Many scientific challenges today require truly multidisciplinary or multi-sector teams holistically redesigning all components of technical systems (data, methods, and projects). This requires a lot of time, experimentation, and willingness to step outside dominant incentive structures to first figure out what works.

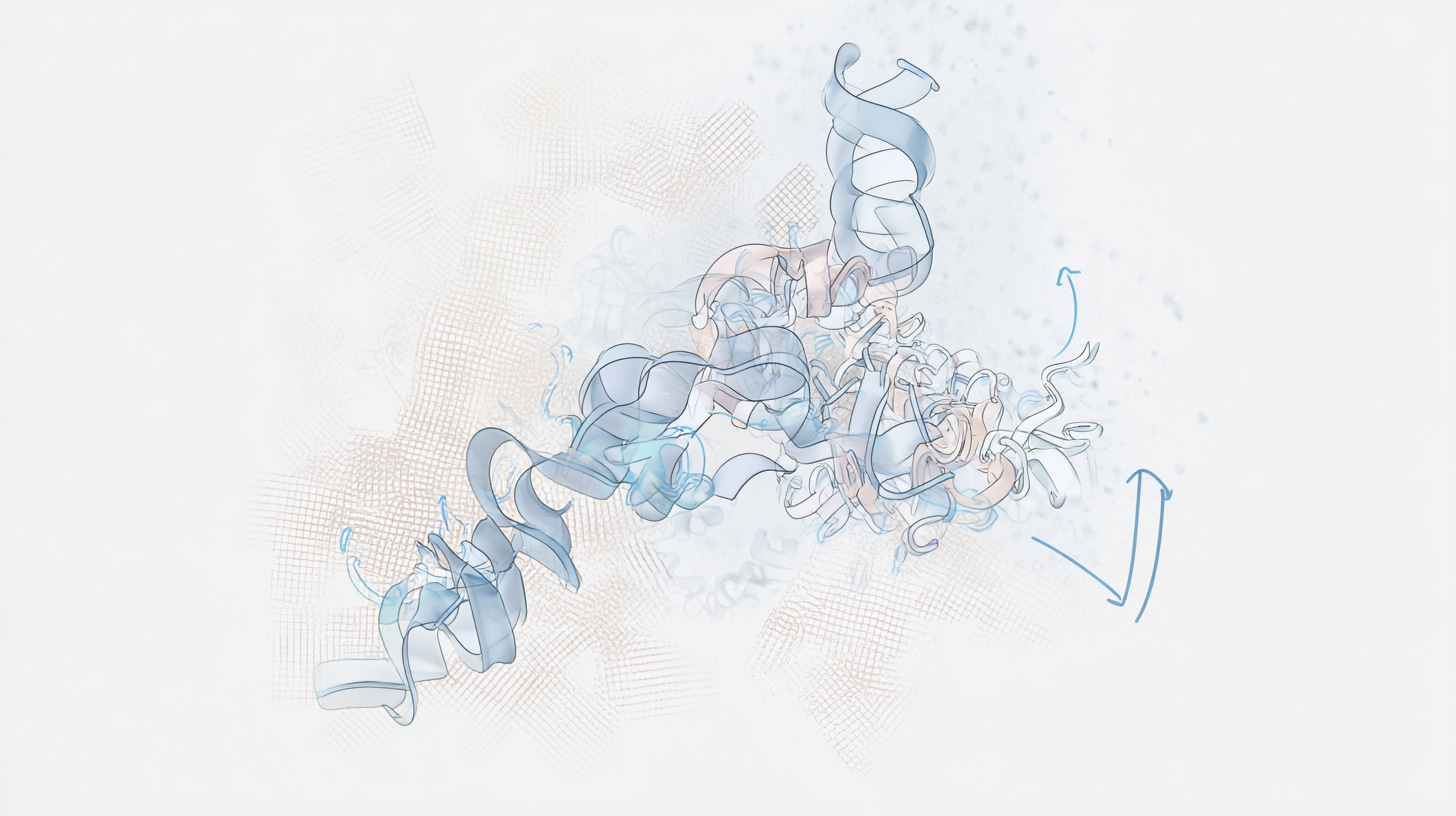

As an example, The Diffuse Project is our first major in-house program for understanding protein motion by co-developing the necessary experimental methods, computational models, data standards, and infrastructure. Our goal is to make dynamic structural biology data as foundational as the Protein Data Bank has been for static structures and to scale the data through broad methodological adoption.

- The outputs of science.

To accelerate scientific progress, we need to realign our infrastructure, metadata, and research artifacts around how AI-empowered scientists will actually work. We also need to build interoperable solutions so that advances compound across the ecosystem. We’re at a rare moment to shed the historical constraints on research sharing that have kept science from reaching its potential. The path forward is full of unknowns, which is where we feel most at home: testing what others don’t yet have the chance to try, and sharing what we learn along the way.

Among many other efforts, we are currently developing The Stacks, an open-access digital platform to experiment with how scientific, technical, and intellectual work is shared and discovered. It’s a prototype publishing infrastructure that we hope to iterate on, working from first principles toward what science actually needs: machine readability, rapid iteration, and genuine reuse.

Why now?

While we’ve been working in this area for a few years, we’ve needed a few things to fall into place before expanding. First, we needed to try a bunch of approaches to develop conviction around a starting framework worth expanding on. Second, we needed the right leadership team to take it to the next level.

I could not be more excited to share that Becky Pferdehirt has joined as Radial CEO. I’ve known Becky for over a decade and watched with admiration as she’s successfully worn many different hats as a scientist. Becky joins from Andreessen Horowitz, where she was an Investing Partner at a16z Bio + Health. Becky was previously an R&D Scientist at Genentech and held research and business development roles at Amgen. She has a PhD from UC Berkeley and a BS from MIT. If you’ve ever interacted with Becky, you also know that she is an exceptionally sharp, creative, and flexible thinker who acts with integrity – all critical for quickly imagining and exploring new directions for basic and translational science. Becky will be working closely with me and Prachee Avasthi, our Head of Open Science, as she takes the reins on Radial.

Joining her is Stephanie Wankowicz as Scientific Program Director of The Diffuse Project, our research initiative focused on protein dynamics. She will be leading its expansion. We are so grateful to Stephanie for fully taking the leap from her current post at Vanderbilt University, where she ran her own lab developing computational algorithms to model conformational ensembles from X-ray crystallography and cryo-EM data.

Becky and Stephanie will be working closely with several others, including Sekhar Ramakrishnan, who joins from The Swiss Data Science Center as Engineering Lead for The Stacks, our experimental publishing platform that we are developing and building through programs like Diffuse. Steven Moss has also joined us from the National Security Commission on Emerging Biotechnology as a new full-time Science Policy Associate to help think about how we scale change at a national level.

Join us

Radial is adaptable by design. We are building programs in-house, funding external teams with multi-year grants, investing in companies, and designing public-private partnerships across government, academia, and industry. We’re looking for people who are willing to take risks and treat informative failures like a badge of honor.

For all of our roles, we’re excited about candidates who will lead by example, shifting perceptions of what’s possible before it’s popular to do so.

For technical leadership: We’re searching for a Head of Bio AI to lead AI across Radial programs. [Apply here]

For structural biology and protein dynamics: Scientists, engineers, and operators for the Diffuse team. [Apply here]

For ambitious ideas that need space: Astera’s 2026 residency program has slots for projects that don’t fit existing funding models. [Apply here]

For new models of partnership: Companies, national labs, academic institutions—if you’re thinking about how your capabilities could be pointed at public-good science, let’s talk.

For working scientists facing bottlenecks: We’re launching an essay competition inviting active scientists to describe a concrete research challenge caused by structural bottlenecks, and experimental strategies to fix them. [Learn more.]

Get involved:

- Join the team: Open positions

- Residency program: Apply by April 19th

- Partnerships: Contact us

- Essay competition: Tell us about your bottlenecks

Today, alongside the launch of Radial, we are opening an essay competition that I’ve been ruminating on for some time: an invitation to active scientists from any sector to share concrete research challenges that can inform our future work at Astera. We’re interested in your hypotheses about what broad structural or systemic issues contribute to the bottlenecks you experience in your own science. It’s important to me that we hear more from active scientists on the ground.

Many of our scientific systems and institutions are no longer fit for purpose. How we fund work, share results, build teams, and connect science to other disciplines or sectors has long been in need of experimentation. This is no longer a controversial statement.

We are living through a historical inflection point that demands change. One force is technological, happening at unprecedented scale and speed. AI is making it harder to ignore systemic and infrastructural gaps, while also changing what solutions are possible. This is an incredible forcing function we should leverage to update our scientific practices.

At the same time, it’s become harder to talk constructively about change in light of political differences and more recent budgetary contractions. But it’s more important than ever to openly debate long-term reform now. And many disagreements are unlikely to be resolved through debate in the absence of real life testing.

We’re looking to you, scientists

The field of metascience, i.e. the science of science, is often driven today by non-scientists: policy experts, economists, sociologists, psychologists, historians, politicians. Their work can be very useful, but practicing scientists should be more deeply involved in shaping the systems they depend on.

Scientists know first-hand what is broken. When scientists themselves have led metascience experiments, the outcomes have often been distinctive and more durable: new institutes structured around questions; focused research organizations built to unlock specific field-level bottlenecks; community infrastructure launched because there was simply no other way to make it happen; critical resources that can’t wait for permission.

We want to help get more scientists in the driver’s seat of this conversation and source more hypotheses that can be tested for systemic improvements. We want all of it to happen in the open to stimulate more useful public debate about science. And we hope that will help the most compelling ideas get real world implementation through support from us or others.

Examples of what we’re looking for

Perhaps an easier way to explain what we’re looking for is to highlight a few historical examples whose early iterations we would have loved to fund. Here are a few:

- The Protein Data Bank

A few crystallographers were frustrated that hard-won structural data was disappearing into individual labs with no way to share it. They bootstrapped a community archive in 1971 with just seven structures and no formal institutional mandate. We would have loved to award an essay describing this gap and fund the early bootstrapping required to prototype the foundational data infrastructure for structural biology and drug discovery worldwide.

- arXiv

The scientist Paul Ginsparg noticed that his colleagues were emailing preprints to each other and built a centralized server in 1991 to do it better. We would have loved to award an essay describing this gap and fund the initial server required to test the utility of what became today’s default open publishing infrastructure for physics, math, and computer science. It has since become a general model for the broader open-access movement.

- Focused Research Organizations

Two scientists, Adam Marblestone and Sam Rodriques, were dead set on trying to generate more connectomics data as a critical public resource for the neuroscience community. This was a defined roadmap that required a start-up-like team, which lacked any dedicated funding mechanism. So they created one by inventing FROs, and it has become an enabling structure for many other projects with similar properties. We would have loved to fund early iterations of FRO projects (and we did through the first FRO: the longevity-focused Rejuvenome!).

- Arcadia Science

This one’s an experiment I’m directly involved in that’s still a work in progress. Arcadia is a for-profit research company co-founded in 2020 by me and another scientist, Prachee Avasthi. It was motivated by trying to reimagine how we could traverse a wider swath of biology for useful discovery more effectively than was possible in our academic labs. We asked how we could use data to develop organism-agnostic tools, compound broader lessons by sharing more of our work in real time, and open up new funding and sustainability strategies. It would be exciting to fund smaller-scale pilots that could inform experiments that lead to new institutes, which can and should be less monolithic than what dominates today.

I hope more scientists will join us in this dialogue, which is why I’ve asked that all submissions be public. I know it can sometimes be uncomfortable to stick your neck out in this way, but positive change is more likely if we normalize open debate. We should approach all disagreements according to the scientific principles we were trained on. Data, not drama: let’s do the experiment.

See more details and apply here by May 1st.

Dileep George is joining Astera as Head of AI, leading our AGI research division. Working alongside our Chief Scientist Doris Tsao, he and the team will explore novel, brain-inspired computational architectures to accelerate the development of safe, efficient and aligned AGI. Astera will continue to support this effort with over $1 billion in committed resources over the coming decade.

Dileep joins from Google DeepMind, where he worked on frontier AGI research on agents with memory, planning and structure learning. Throughout his career, Dileep has shown that drawing on the computational principles of biological intelligence opens up novel, high-impact pathways for AGI research. At Vicarious, he scaled algorithms for visual processing and reasoning, gaining worldwide attention for breaking text-based CAPTCHAs with human-like data efficiency. He also pioneered AI-powered robotics as a service for industrial applications. At Numenta, he co-developed Hierarchical Temporal Memory, the theoretical framework modeling how the neocortex learns and reasons.

Dileep joins Astera alongside Miguel Lázaro-Gredilla, previously a Research Scientist at Google DeepMind. As Research Lead, Miguel will spearhead the development of world models that utilize hierarchical latent variables for long-horizon planning and robust reasoning.

Neuro-inspired AGI research is underexplored relative to its potential

The overwhelming majority of AI research today pursues a dominant paradigm: scaling transformer architectures trained on massive datasets. This approach has produced remarkable results and will likely continue to do so, but concentration around any single research direction leaves promising alternatives underexplored.

The principles of biological intelligence likely offer novel approaches to AI engineering at scale that aren’t captured in existing research paradigms. This could help address two sets of challenges that remain on the path to AGI:

1. Current AI systems lack fundamental capabilities that biological intelligence demonstrates. They can’t handle long-range planning that requires maintaining coherent goals across complex action sequences, or learn continuously from experience the way humans do. They still require massive datasets for tasks where humans need only a handful of examples, and they continue to fail to generalize robustly to situations that differ meaningfully from their training data.

2. The safety and alignment challenges posed by current architectures remain unsolved, even as we continue to scale them. We don’t yet know how to build systems whose goals stay aligned with human values as circumstances change in ways they weren’t trained for. We can’t reliably interpret why models make the decisions they do, which makes it difficult to predict or prevent failures.

Commercial investments currently concentrate on scaling transformers, which risks trapping the field in local minima: optimizing a single approach while leaving vast parts of the solution space unexplored. Biological intelligence offers computational principles that current architectures don’t capture, opening pathways to systems that are more efficient and more naturally aligned with how humans think and perceive.

Bridging neuroscience and AI engineering

Efforts to map biological intelligence — how the brain constructs perception, cognition, and intelligence itself — remain disconnected from the engineering of AI systems. Neuroscience and AI research proceed largely in parallel with limited integration.

Providing decade-scale commitment and computational resources, Astera is running two research programs in tight integration:

- Decoding the brain’s computational architecture: Led by Doris Tsao, Chief Scientist for Astera Neuro, our Neuro division is working to decode the fundamental mechanisms through which the brain constructs intelligence. These capabilities represent some of the hardest unsolved problems in AI, and the brain solves them with remarkable efficiency.

- Building AI systems that learn like humans do: Now led by Dileep, our AGI division tackles the research and engineering challenges of building systems that exhibit these capabilities: how intelligent agents adapt continuously to changing environments, correctly attribute rewards to actions in scenarios with sparse feedback, and build hierarchical memory systems that enable efficient retrieval and generalization.

Dileep and his team will work closely with Doris, whose work has revealed some of the most detailed accounts of how neural activity produces perception to date. Together, they hope to create an iterative research program where neuroscience discoveries inform engineering approaches, and engineering challenges surface new neuroscience questions. Going forward, we hope to see others more tightly link basic neuroscience and applied AI work.

This work will be conducted in line with Astera’s broader commitment to open science. We believe progress on AGI is better served by distributed work across the field than by locking insights away.

The team this requires

We’re now building a team whose capabilities span deep theoretical investigation of biological intelligence, large-scale ML systems engineering, and experimental validation of novel architectures.

We’re actively looking for researchers and engineers with strong machine learning backgrounds and deep curiosity about neuroscience: people who want to investigate what’s missing from current approaches and build something better.

If this vision excites you — whether you’re a researcher, engineer, or someone who wants to work on foundational questions about intelligence — we want to hear from you.

There’s always a need for more ideas and talent in this area. If you have an interesting, underexplored angle you’d like to chase down, we’ve also recently opened a call for applications to the Neuroscience and Artificial Intelligence tracks of Astera’s residency program. Our residency is meant to support talented innovators seeding early-stage projects, especially those that might sit outside of what’s conventionally pursued. We’re building a community here that could be a great hub for this type of exploration. We hope you will consider applying.

Important science and technology development often falls through the cracks of public funding and private markets, i.e. work that may be high impact but risky, requires long timelines, or involves unpopular ideas. These areas are ripe for philanthropy. And as AI ushers civilization toward an event horizon, we need more people working on the hardest problems with many shots on goal.

Over the last five years at Astera, we’ve tested different approaches to funding and building ambitious technical work. We’ve explored a lot of directions to figure out where we think we can have the most impact. We’re now sharpening our focus on two areas: intelligence—both biological and artificial—and AI-enabled life sciences. Progress in either could help positively shape humanity’s future in critical ways.

Both areas benefit from more philanthropic support, as they involve open questions, unexplored territory, and long timelines. The right experiments aren’t always obvious, and success might look nothing like expected. We’ve also chosen them because we personally know them well. Jed is an engineer focused on neuroscience and intelligence foundations; Seemay is a biologist experimenting with how research gets done. We engage directly with technical details, which allows us to embrace more uncertainty.

Structure and flexibility for technical work

Creating an organizational structure that sustains this work over decades requires more than knowing where to focus. We’ve found the most effective technical efforts function like startups: flexible, nimble, guided by leaders with real authority to make technical calls.

Like start-ups, they also need to be able to deploy resources much more flexibly than is typical of most philanthropy. In addition to giving out grants, we find that work, especially of the more opinionated type, benefits from a wide range of tactical strategies, including hiring, contracts, competitions, and for-profit investments.

Moving forward, we’re intentionally separating Astera’s foundation from the technical divisions it supports. The foundation handles shared operational and administrative infrastructure to enable technical teams that run semi-independently like start-ups. Each has a leader with deep expertise and CEO-like authority, supported by flexible, long-term capital and operational scaffolding through the foundation. These include:

Neuro & AGI divisions that explore how biological systems compute, how that relates to artificial systems, and what approaches might lead toward general intelligence. We think there’s a wider space of possible architectures than currently explored, and neuroscience offers crucial insights. This builds on work by our current researchers and fellows.

A life sciences division, where we’re rethinking how science gets done in the age of AI. Today’s scientific approaches were largely designed for a different era. AI has given new urgency to the need to reimagine our practices, motivating us to expand efforts around funding, structuring, and publishing approaches. We believe that the best way to innovate on this front is by iterating alongside active, ambitious research efforts, for instance by embedding initiatives like The Diffuse Project with open science experimentation.

The right leaders

Our new start-up-like approach only works if the right leaders are in place. We look for people who are comfortable with uncertainty, technical enough to engage directly, and equipped with a builder mentality to create what’s needed. Critically, we’ve sought out people whose primary experience is outside philanthropy: in industry, startups, or research environments.

We’ve already been fortunate to attract such people. In the coming weeks, we’re excited to introduce several new folks who will be joining Astera to help lead new divisions in intelligence and life sciences. In parallel, we’re also refocusing the residency program to better prioritize people and ideas where we have long-term commitment and in-house expertise.

We’re still learning

Astera has always been an experiment in doing philanthropy differently. This structure is another iteration. We have strong convictions but expect to keep adapting.

We’re eager to connect with people and organizations thinking about new approaches to funding or doing science. Or if you’re working on similar problems in intelligence or life sciences, we’d like to hear from you.

More at astera.org, or reach out at info@astera.org.

The Astera Institute is excited to launch a major new neuroscience research effort led by Dr. Doris Tsao, who will be joining as Chief Scientist for Astera Neuro. We seek to understand one of the deepest mysteries of science: how the brain produces conscious experience, cognition, and intelligent behavior. Astera will support this effort with $600M+ over the next decade.

Doris has spent her career developing one of the most detailed accounts of how neural activity gives rise to perception through work on the neural code and circuitry underlying face and object recognition. This work shows how a complex visual percept, object identity, is represented by a principled geometric code. Her recent work explores a new computational framework for how symbols first arise in the brain through specialized circuits for object tracking.

What are we doing?

Across every moment of our lives, the brain transforms raw sensory input into a coherent world filled with objects, relationships, meanings, and a sense of self. Yet we still do not understand the fundamental computational principles the brain uses to construct this internal world. Uncovering these principles would transform both neuroscience and technology, revealing the mechanism responsible for generating conscious experience and, at the same time, providing a new framework for AGI.

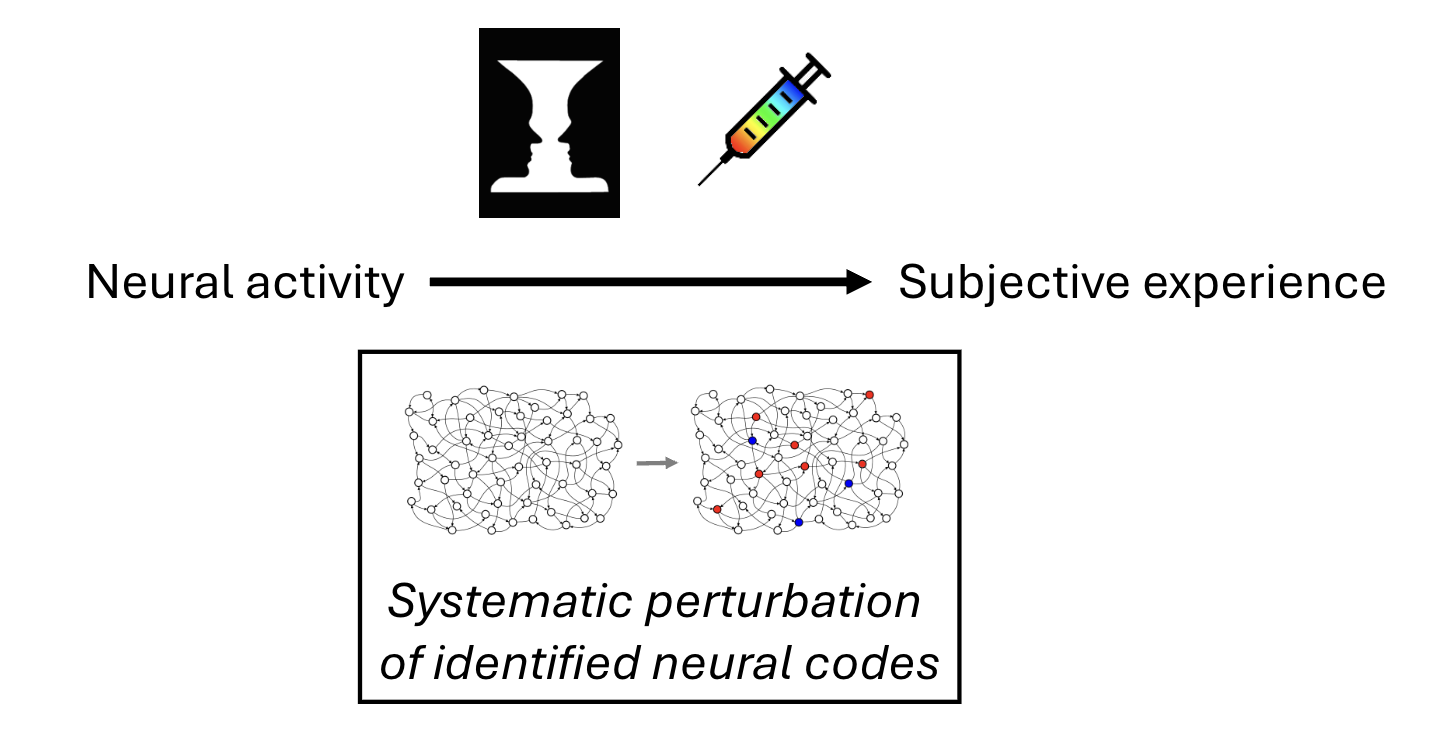

At the heart of our new effort is the conviction that true understanding of the brain’s internal model means being able to manipulate it in a controlled way. Towards this goal, we are betting that the brain’s representational architecture is compositional, built from elemental units and a neural syntax for combining them. By identifying these fundamental units and the rules that create and link them, we can uncover the brain’s infinitely generative internal code. This, in turn, would provide a principled way to construct or modify internal representations, much as knowing the words and grammar of a language allows the creation of an unlimited range of sentences and meanings. Such capability would mark a profound advance in understanding.

The compositional framework remains a hypothesis, but pursuing it opens a path for fundamentally new kinds of experiments. The first step will be to measure neural activity through large-scale recordings across a rich variety of stimuli and behaviors, allowing us to characterize the underlying neural code. We will then attempt to write in hypothesized neural codes and thereby construct or alter internal representations according to proposed compositional rules. In this way, we can move neuroscience beyond passive observation and towards active, engineering-style tests of a model. Whether or not our hypothesis proves fully correct, this approach will accelerate our understanding of how the brain’s internal model is built.

A field ready for a paradigm shift

The ability to precisely map and modify the brain’s internal model may sound like a lofty goal, and indeed, for decades, progress in neuroscience was limited by technology. But that barrier has largely fallen, and we believe now is the right time for our moonshot. We now have the tools to interrogate the brain at unprecedented resolution and scale.

What is needed next is a coordinated engineering effort to fully harness these tools. Advances in large-scale neural recording, targeted stimulation, chronic high-density interfaces, and computational modeling have created a unique moment where a focused, non-clinical, scientifically driven program can push far beyond what academic labs or clinically oriented companies alone can achieve. We intend to fill this essential gap between traditional basic research and clinically driven neurotechnology.

Progress towards our goals opens major branches of independent inquiry:

- Inspiring new approaches to building and steering AI systems: Understanding the brain’s computational strategies—the architectural principles and representations—could reveal fundamentally different approaches to building AI systems that are orders of magnitude more efficient and naturally aligned with human cognition. Industry pursues only a narrow slice of what’s possible. We believe reverse-engineering the only generalized intelligence in existence could open up new pathways to general artificial intelligence.

- Deepening our fundamental understanding of biological intelligence and conscious experience: The brain is one of the universe’s wonders. What is the structure of neural activity required for a specific experience? What are the primitives of perception and thought? How does the brain represent itself? How do disruptions in the brain manifest as psychiatric and neurological conditions? We seek to develop a theory of conscious experience that successfully predicts the experiences that emerge when we write specific patterns to the brain.

- Opening pathways to revolutionary neural interventions: Today’s brain-machine interfaces work at the periphery, translating motor commands or delivering basic sensory inputs. But understanding deeper computational structures could enable interfaces that engage with the brain’s core representational system. This could have major therapeutic applications, for example, a visual prosthesis for the blind that restores vivid, naturalistic visual experience, not just pixelated sight.

Why Astera is pursuing this work

Since the founding of the Astera Institute in 2020, Obelisk, Astera’s AGI research program, has pursued the hypothesis that a better understanding of how intelligence arises in natural systems could reveal computational principles missing from current AI paradigms. The brain achieves flexible, general intelligence with roughly 20 watts of power. It constructs everything we experience—every object we see, every thought, every feeling—from patterns of electrical activity across ~100 billion neurons. It learns continuously from sparse data. It plans, imagines, and constructs a coherent model of the world. We don’t yet understand how.

Astera Neuro brings deep experimental neuroscience into direct dialogue with this work. We hope to create a tight iterative loop across teams where experimental findings shape AI architecture research, and computational questions drive new lines of neuroscientific inquiry.

We believe Doris has developed what may be the most detailed empirical account of how neural activity produces perception so far. The potential of her work requires long-term investment. We are excited to work with Doris to test her model and systematically explore how the brain constructs reality in direct collaboration with Obelisk engineers and researchers exploring alternative approaches to AGI. The iteration between these basic and applied research efforts will surface things neither could find separately.

Research will be shared exclusively outside traditional journals as a forcing function for developing faster, more open, and more useful outputs that represent the full scientific process. As we’ve seen with other efforts, we believe such an approach will enable greater alignment of scientific goals and values across the team. We will also be iterating on ways to make these outputs more compatible with AI-driven discovery.

Building the team

We are excited for the opportunity to build this moonshot. We have a chance to experiment with how science can be done by designing our team and approaches in a purposeful way. This work requires capabilities that don’t typically collaborate as part of a cohesive iterative circuit at an institutional scale: neuroscientists who can design experiments on complex natural behaviors, ML engineers who can build models from massive neural datasets, optical engineers working on holographic optogenetics and advanced imaging, systems builders who can create scalable experimental infrastructure, and metascience innovators dedicated to accelerating all aspects of this work.

Doris brings decades of foundational work on neural coding. For her next chapter with Astera, she is joined by an exceptional founding team (soon to be announced) whose contributions span large-scale reading and writing to neural circuits, clarifying the neural basis for cognition, and understanding brain function during naturalistic behavior.

We are now looking for a Chief Operating Officer who will work in direct partnership with Jed and Doris to transform their scientific vision into operational reality. They will be orchestrating collaboration across disciplines, building systems that support both rigor and speed, and helping create an organization capable of tackling problems at this scale.

What do standardized, low-cost space telescopes, ultra-high-performance bio-inspired materials, and fusion energy that costs under 1¢/kWh all have in common? For one, each of these domains holds incredible potential to further human flourishing. For another, each represents a new idea that will be pursued over the next year at our Emeryville, CA campus.

We’re delighted to introduce three new residents to our Residency Program — a program in which residents receive a salary, a budget of up to $2M for team and expenses, compute access, lab space, and an exceptional community of talented like-minded peers, mentors, and investors.

Read on to learn more about our three new ambitious entrepreneurs, along with a brief overview of their work. In the coming months, we’ll be sharing more detailed profiles of the residents and their projects.

If you’re interested in applying to be a future resident, you can reach us at residency@astera.org, or subscribe here to receive our next call for applications, coming in early 2026.

Aaron Tohuvavohu – Cosmic Frontier Labs

Dr. Aaron Tohuvavohu is a physicist, astronomer, and explorer designing the next generation of space telescopes. He has designed missions and experiments across the electromagnetic and multi-messenger spectrum, with expertise spanning black holes, relativistic explosions, UV and X-ray instrumentation, and space systems engineering. Most recently, he led an 11-month sprint from clean sheet to launch of the highest-performance UV detector in orbit, and drove major upgrades to NASA’s Swift Observatory, significantly expanding its scientific reach, impact, and efficiency.

Project description

Cosmic Frontier Labs is building a new class of scientific tools to accelerate discovery and exploration of the Universe. We are expanding humanity’s cosmic horizons by scaling up the number and capability of orbital observatories, bringing Hubble-level quality to fleets of telescopes rather than single flagships. By redesigning precision instruments for manufacturability and iteration, the team is moving space astronomy from an era of scarcity to one of abundance, continuous innovation, and exponential discovery.

These telescopes will form a platform for science that evolves as quickly as the questions we ask. We will build the platform iteratively, to continuously integrate new detectors, optics, and algorithms on successive units. In this near future, exploring the cosmos won’t depend on waiting decades for the next great observatory, but on a living, growing constellation of instruments, each a window into the expanding frontier of human understanding.

Open roles: Contact info@cosmicfrontier.org if you’re interested in the mission and want to explore ways to contribute!

Damien Scott – 1cFE

Damien Scott is a technologist and founder. Homeschooled in Botswana and shaped by science fiction, his north star is to build energy systems that move humanity up the Kardashev scale toward post-scarcity. His first entrepreneurial venture was founding Marain, an electric and autonomous-vehicle simulation and optimization company that was acquired by General Motors in 2022. His career has spanned energy and mobility systems across startups and large companies, including the extreme engineering environment of Formula 1 at Williams F1. Beyond racing, he worked on a wide variety of initiatives, from adapting uranium-enrichment centrifuge concepts, to electromechanical flywheel energy storage, to hybrid hypercars and automated mining systems. He has a BSc in physics from the University of Sydney and an MS and MBA from Stanford University.

Project description

Everything humanity values depends on abundant, inexpensive energy. Most usable energy across the universe is fusion…with extra steps. The last decade has brought major public and private progress towards cutting out those steps, to directly generate electricity from fusion, and bring us closer to abundant, low-cost energy. The 1cFE initiative builds on this progress to set our ambitions higher: could the cost of fusion reach below-1¢/kWh LCOE within the next ten years? We map cost-first corridors to sub-cent power, integrating physics, engineering, and manufacturing. We will also publish open analyses, and test how emerging AI capabilities can radically improve and compress cycles across science, first-of-a-kind engineering, and deployment. Our outputs are intended to steer R&D, capital allocation, and policy toward the fastest corridors to sub-cent fusion energy, thereby pushing humanity up the Kardashev scale and upgrading our civilization.
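To give a sense of what a sub-cent target implies, here is a back-of-the-envelope sketch using the standard simplified LCOE formula: annualized capital cost plus fixed operating cost, divided by annual energy output, plus any variable cost. It is not the 1cFE team's model. The function names are hypothetical, and any inputs you pass in (capital cost, discount rate, plant lifetime, capacity factor) are placeholders for illustration, not figures from the project.

```python
HOURS_PER_YEAR = 8760


def capital_recovery_factor(rate, years):
    """Fraction of up-front capital repaid each year at a given discount rate."""
    if rate == 0:
        return 1 / years
    growth = (1 + rate) ** years
    return rate * growth / (growth - 1)


def lcoe_cents_per_kwh(capex_per_kw, fixed_om_per_kw_yr, variable_cents_per_kwh,
                       capacity_factor, rate, years):
    """Simplified levelized cost of electricity, in cents per kWh."""
    annual_cost = (capex_per_kw * capital_recovery_factor(rate, years)
                   + fixed_om_per_kw_yr)                 # $ per kW per year
    annual_kwh = HOURS_PER_YEAR * capacity_factor         # kWh per kW per year
    return 100 * annual_cost / annual_kwh + variable_cents_per_kwh


def max_capex_for_target(target_cents, fixed_om_per_kw_yr, variable_cents_per_kwh,
                         capacity_factor, rate, years):
    """Invert the formula: the capital cost ($/kW) that just meets a target LCOE."""
    annual_kwh = HOURS_PER_YEAR * capacity_factor
    annual_budget = ((target_cents - variable_cents_per_kwh) / 100 * annual_kwh
                     - fixed_om_per_kw_yr)
    return annual_budget / capital_recovery_factor(rate, years)


if __name__ == "__main__":
    # Hypothetical inputs only: 1 cent/kWh target, $10/kW-yr fixed O&M,
    # 0.1 cent/kWh variable cost, 90% capacity factor, 7% rate, 30 years.
    capex = max_capex_for_target(1.0, 10, 0.1, 0.9, 0.07, 30)
    print(f"Capital cost ceiling under these assumptions: ${capex:,.0f} per kW")
```

Inverting the formula this way makes the cost-first framing visible: once a target price and financing terms are fixed, the allowable capital cost per kilowatt follows directly.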

Open roles: Theoretical Physicist and Systems Engineer

Tim McGee – Impossible Fibers

Tim McGee is a biologist and materials innovator developing new ways for proteins and composites to self-assemble into high-performance materials. Trained in Biomolecular Science and Engineering at UCSB, his mission is to translate biology into design and manufacturing. As an early pioneer of bio-inspired design at Biomimicry 3.8, IDEO, and later his own firm, LikoLab, he has worked with global teams on challenges ranging from advanced coatings for food, to novel textile manufacturing, to the biophilic design of urban environments. Most recently, McGee founded Impossible Fibers at Speculative Technologies, leading a DARPA-funded collaboration to predict fiber properties directly from amino acid sequences. His work integrates biology, design, and engineering to create new manufacturing capabilities where materials are assembled from the nanoscale to the macroscale.

Project description

The Impossible Fibers Lab is building a new manufacturing environment that enables proteins to self-assemble into exceptional materials: fibers and composites with electrical, optical, and mechanical properties beyond what’s achievable today. Existing fiber production systems were designed a century ago, and were made for cellulose and plastics, not for the complexity of proteins. McGee’s team combines microfluidics engineering, encapsulation chemistry, automated liquid handling and robotics, and novel spinning techniques to explore how protein composites form, align, and transform during fiber fabrication. The resulting structured dataset will map the relationships between molecular sequence, process conditions, and material outcomes, creating the foundation for predictive, bio-inspired materials design.

In the long term, Impossible Fibers seeks to make matter programmable, from quantum interactions to custom product-scale performance, laying the foundations for a new era of materials manufacturing.

—

We extend a warm welcome to our new residents. Stay tuned for a deeper dive into their work!

We’re moving into an age in which agents are our partners across all aspects of science. Machines will systematically process data, spot patterns, propose experiments, and even generate hypotheses across more and more of our work. I’m excited for how this will accelerate science.

A technological shift of this magnitude requires a similarly big shift in what we study and how we go about it. For one, there should be significantly more emphasis on what data is important to generate and how we build, share, and scale datasets. For another, there should be much more emphasis on funding system architecture that best enables systematic AI-driven discovery, as opposed to primarily funding individual labs to expand datasets.

Today, I’m happy to announce a new $5M funding initiative in structural biology that will experiment with how we make this shift.

The role of scientists in science

Humans remain essential in science. This is a critical moment to stake out where we can most uniquely contribute. What can’t AI solve yet? And for the things it can, how can we leverage its capabilities to do even more creative, generative exploration beyond that radius?

Machines excel at synthesizing large amounts of information through systematic data processing, pattern recognition, and probability calculations. We should replace ourselves in those types of analyses where possible. It’s an uncomfortable but necessary transition.

There’s still plenty of upstream and downstream work that only we can do:

- Downstream: ML predictions are hypotheses, not conclusions. We must test them through research and reuse, closing the loop to validate and improve predictions.

- Upstream: Machines work with the data we give them; the nature and design of this data sets the ceiling for what’s possible to predict. We decide what questions to ask and how to architect the systems to answer them.

This piece focuses on the upstream. Too many research proposals focus on generating more data without asking how those expensive exercises will get us somewhere worthwhile. Many brute-force scaling efforts will hit diminishing returns unless we first rethink the kinds of data and data systems we need. This is where human ingenuity will matter most.

The next PDB is the PDB

We need to work smarter, not just harder. As a structural biologist, I’ll give an example close to my heart. And one I’m funding next.

A common trope in funding circles is “What’s the next Protein Data Bank (PDB)?” The question is asked as if structural biology were “done” post-AlphaFold. However, one of the next big challenges is the PDB itself: moving from static protein structures to predicting how they move.

Since form informs function, we use protein structures as clues for what proteins might do in cells. But proteins inherently work through motion: traveling, binding, catalyzing, breathing, and changing conformations. Unlocking protein dynamics would be a giant leap toward uncovering more protein function. This helps us better understand, engineer, and manipulate biology. Biotech companies are already attempting to leverage local data or simulations about protein dynamics for drug modeling.

So one of the “next PDBs” is the PDB itself. It’s not just a remake; it’s PDB 2.0, wherein we can transition from static snapshots to movies. PDB 2.0 can bring protein structures to life.

To get there, we need to think carefully about what conditions are required for such a breakthrough. PDB 1.0’s success depended on being large and standardized, with open data norms. But it also hinged on two other major elements:

- Getting the right slice of information: The PDB didn’t contain all protein structures. But it had enough variation in sequences and folds to reveal key design principles. Data was structured for computation (e.g., multiple sequence alignments) to enable breakthroughs. Today, we know more about ML needs, so we can be intentional about focusing our efforts on the data that is most information-rich.

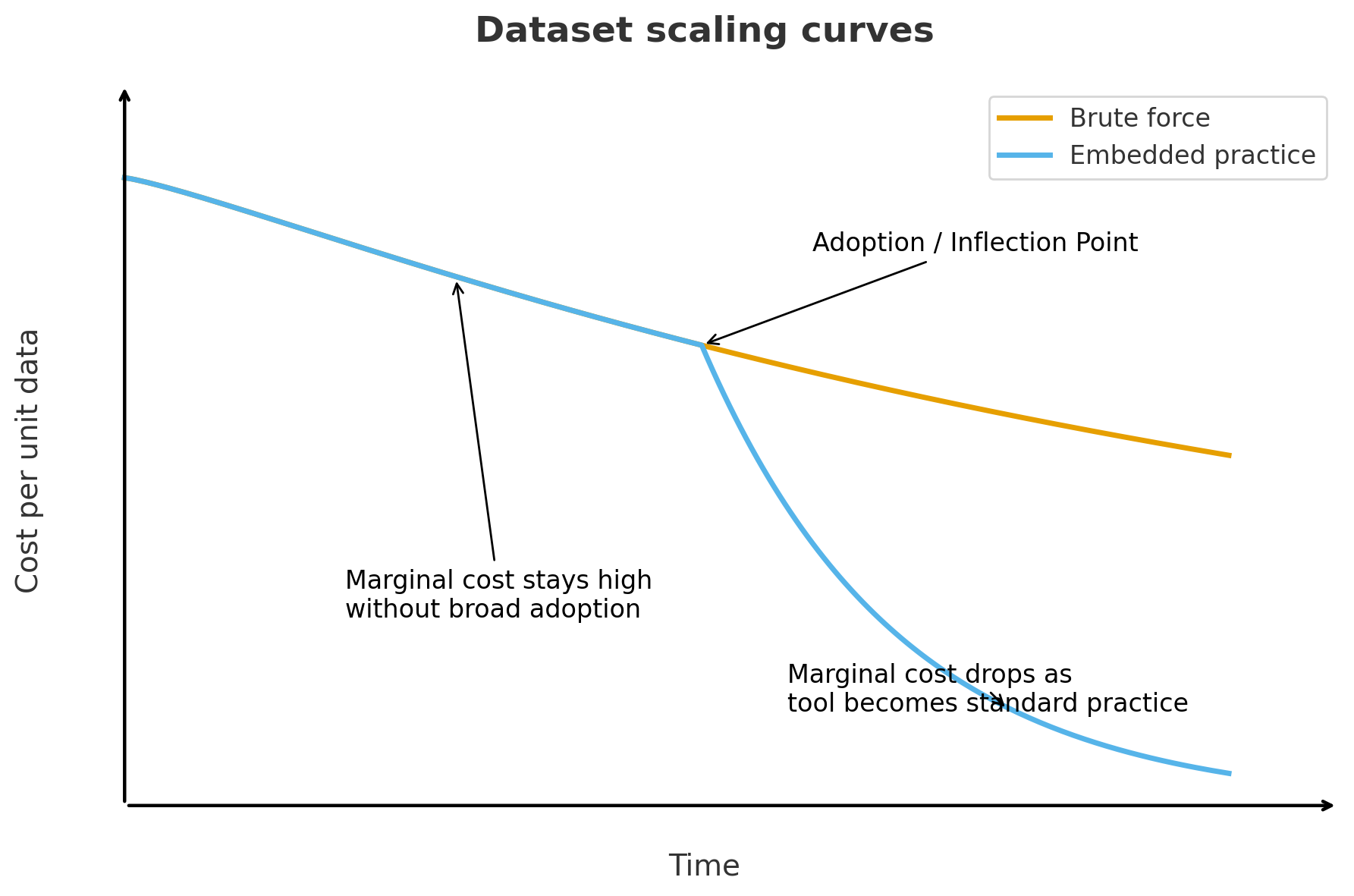

- Scaling through embedded practice: The PDB scaled because crystallography naturally produced standardized data as a byproduct of routine work across methods, hardware, file formats, and infrastructure. This reduced marginal costs as the practice spread. It’s very different from a brute-force approach to data generation, where costs keep rising as the volume of data increases.

Finding the right information and embedding it in practice go hand in hand: the right “plumbing” motivates and enables the scientific community to capture valuable data.

We should challenge ourselves to be more cost-effective with PDB 2.0, now that we know more about what we are working towards. We also have machines to help us run quick calculations on what data is most information-dense and therefore most valuable. Instead of taking fifty years and costing tens of billions of dollars, could PDB 2.0 be faster and more efficient?

Principles in practice: introducing The Diffuse Project

With a $5M seed for The Diffuse Project from Astera, work will be coordinated across several universities (UCSF, Vanderbilt, Cornell), scientists associated with national labs and light sources (Los Alamos, Lawrence Berkeley, CHESS), and a team within Astera Institute. Our goal: redesign multiple components of the X-ray crystallography process to capture oft-discarded data on protein conformations.

Traditional crystallography focuses on Bragg scattering, the bright spots that represent the averaged conformation in a protein crystal. Other protein conformations in a crystal produce a more distributed signal — diffuse scattering — which is usually ignored due to its inherent complexity. But today, we have more tools to deal with the messy heterogeneity of diffuse scattering than ever before. Our technical aim is to transform diffuse scattering into a usable signal for hierarchical models of conformational ensembles. I’m excited by how current technologies allow us to better embrace biological complexity in this way.
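The split between the two signals can be written down for a toy case. The sketch below is an illustration under simplifying assumptions, not The Diffuse Project's pipeline: a one-dimensional "molecule" of identical point atoms, a handful of made-up conformations, and unit cells that each adopt a conformation independently. Under those assumptions, the standard result is that Bragg intensity follows the squared magnitude of the ensemble-averaged structure factor, while the diffuse intensity is the variance left over. All names and coordinates here are hypothetical.

```python
import cmath


def structure_factor(positions, q):
    """Structure factor of identical point atoms at fractional positions."""
    return sum(cmath.exp(2j * cmath.pi * q * x) for x in positions)


def split_intensity(conformations, q):
    """Return (bragg, diffuse) intensity at scattering coordinate q.

    Assumes every unit cell independently adopts one of the listed
    conformations with equal probability.
    """
    factors = [structure_factor(c, q) for c in conformations]
    n = len(factors)
    mean_f = sum(factors) / n
    mean_sq = sum(abs(f) ** 2 for f in factors) / n
    bragg = abs(mean_f) ** 2       # |<F>|^2: what the averaged structure explains
    diffuse = mean_sq - bragg      # <|F|^2> - |<F>|^2: what the variation adds
    return bragg, diffuse


# Made-up ensemble: only the third atom moves between conformations.
ENSEMBLE = [
    [0.10, 0.35, 0.60],
    [0.10, 0.35, 0.66],
    [0.10, 0.35, 0.72],
]

if __name__ == "__main__":
    # In a real crystal, Bragg peaks appear only at integer q;
    # the diffuse term is defined between them as well.
    for q in (1, 2, 3):
        bragg, diffuse = split_intensity(ENSEMBLE, q)
        print(f"q={q}: bragg={bragg:.3f} diffuse={diffuse:.3f}")
```

If every conformation in the ensemble is identical, the diffuse term vanishes. That is one way to see why this signal carries information about motion that the averaged structure alone does not.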

In close collaboration with experts across the crystallography workflow, we’ve devised a strategy to test whether diffuse scattering could become mainstream practice in structural biology. Our parallel mission is to make the workflow useful, easy, and affordable for anyone studying protein function.

Our team is committed to radical open science by releasing data quickly and sharing progress entirely outside traditional journals — and there have already been many benefits. It has made our brainstorming and collaboration processes much more ambitious and fun. It’s freed us up to focus on outputs that are most useful and representative of our process.

An exciting aspect of the team’s open approach is the chance to experiment in real-time with what scientific publishing could look like in the future. Our open science team is collaborating with our researchers to figure out how they can quickly share outputs that are most useful and most representative of our process. As AI becomes more central, our publishing will become more data-centric as well. This is an invaluable opportunity to iterate on this alongside active science to solve real problems on the ground.

We’ve only been in operation for about a month, and we already have data and results to share! You can follow all of this work on The Diffuse Project website.

Utility is the north star

Without journal constraints, we can think more clearly about what will make our science as impactful, accelerated, and rigorous as possible. We keep coming back to the mantra: utility is the north star.

What might high-utility success look like? Our answers span different time horizons, but we strive to be concrete. Here are some examples (which will likely evolve as we go):

- Adoption of diffuse scattering by experts outside the initiative

- Adoption of diffuse scattering by non-experts

- Integration of other modalities with a growing X-ray diffuse scattering dataset

- ML models for protein motion trained on diffuse scattering data

- Biotech start-ups founded from these models

- New therapeutics on the market developed from these models

The diffuse scattering work could take 7–10 years to reach a scaling inflection point. That’s longer than most projects, but shorter than the time required for the original PDB to scale to a size that enabled AlphaFold. I’m optimistic that we could speed this up if The Diffuse Project truly succeeds in enabling the broader community. It’s a challenge that our team is excited to take on.

A note for funders

Funders, this is the time to flip the script on what science we support. We must move from primarily funding individuals to funding coordinated efforts that “lift all boats” in agent-led discovery systems.

Effective data systems will be a bottleneck for transformational ML. Systems have interdependent parts; if you don’t redesign multiple components at once, the dependencies between them can leave you stuck at a local maximum. This is a well-known principle in evolution and engineering. But we’re not great at applying this scientific framework to our own science. We need funding mechanisms that enable sustained, coordinated design sprints across interconnected entities with different areas of expertise.

This kind of systems-level innovation is risky for individual researchers. Not only does it require grappling with the unknown, it also requires grappling with many unknown pieces at once. Any proposal for a project like this would be disingenuous if it provided a super specific roadmap with a high degree of certainty.

Instead, funders need to redefine what success means so that researchers can embrace risk. Namely, teams should prioritize learning and sharing, even about their failures, which can often be informative. The goal can’t be to “win.” It needs to be to learn. The learning process is dynamic (just like proteins!) and we should fund and publish research with that in mind.

Iterating on data systems is also operationally risky. It requires proper infrastructure, engineering, compute access, and ML collaborations. We’re experimenting with unique ways to get this done without creating more bureaucracy or coordination overhead. Astera is providing compute access through Voltage Park and hiring a team for much of the computational and modeling work. This is another avenue by which funders can create an outsized impact through their support.

And at a minimum, we must insist on open science and open data practices. Otherwise, we risk limiting reuse, rigor, and impact. It’s the greatest insurance funders have for a return on their public good investment. Based on my own experiences, I’m very optimistic that we can hold the line on this without sacrificing talent. With the open science requirement, I’ve been able to quickly filter through to some of the smartest scientists, with the most abundant mindsets, with whom to go after big problems. The Diffuse Project’s team is awesome. I could not be more thrilled about the stellar set of scientists leading the research.

A first step towards the future

The Diffuse Project is not an outlier. It’s one example of the broader shift we need to make towards a data-centric future of discovery.

This shift will allow us scientists to work smarter. As machine learning systems become more prominent in hypothesis generation, simulation, and interpretation, the role of scientists will not disappear — it will evolve. Our ability to be creative about what data we generate, how we go about it, and what it enables, will become an increasingly central part of how we contribute to discovery.

This is not a loss. It’s an opportunity. It’s a chance — for both scientists and funders — to reclaim the architectural layer of science as a thoughtful, creative, and essential realm of experimentation and discovery. And it’s a place where human judgment still matters — perhaps more than ever.

If you’re working on something that could benefit from this kind of innovation, I’d love to see it published openly so I can follow and learn through open discourse. In the spirit of open science, I generally prefer not to respond to private emails or DMs. I would love to see open science publishing in effect at the ideation stage too.

Interpretability is one of the biggest open questions in artificial intelligence. In other words, what’s going on inside these models?

Adam Shai and Paul Riechers are the co-founders of Simplex, a research organization and Astera grantee, focused on unpacking this question. They’re working to understand how AI systems represent the world — a critical component of AI safety with major societal implications.

We sat down with them for a conversation on internal mental structures in AI systems, belief-state geometries, what we can learn about intelligence at large, and why this all urgently matters.

How did you both decide to leave academic tracks to start Simplex?

Adam: My background was in experimental neuroscience. I spent over a decade in academia. During my postdoc, I was training rats to perform tasks, recording their neural activity, and trying to reverse-engineer how that neural activity leads to intelligent behavior. I thought I had some handle on how intelligence works.

During that time, ChatGPT came out. I was stunned at its abilities. I started digging into the transformer architecture, the underlying technology that powers ChatGPT, and I was even more shocked. The transformer architecture didn’t intuitively tell a story about its behavior.

I realized two things at that time. The first was unsettling. I’d thought of myself as a neuroscientist with a pretty good intuition for the mechanistic underpinnings of intelligence. But that intuition seemed to be completely incorrect here.

The second was even scarier. What are the societal implications for having this kind of intelligence at scale? The social need to understand these systems, and to understand intelligence more broadly, is more important than it’s ever been before. At the same time, there’s also this new opportunity to understand intelligence, not just for artificial systems, but maybe even for humans — to really learn more about our own cognition.

Paul: I came from a background in theoretical physics, thinking about the fundamental role of information in quantum mechanics and thermodynamics, with a recurring theme of predicting the future from the past — how to do that as well as possible. That’s traditionally a core part of physics — your equations of motion help you predict how the stars move, for example. But it also gets into chaos theory, where you have very few details available to predict a chaotic, complex world. And you quickly get to the ultimate limits of predictability and inference.

At the time, this had nothing to do with neural nets, and I hadn’t paid much attention to them. They work on predictions of tokens, or chunks of words, and they seemed unprincipled compared to the beautiful theory work I was doing in physics. But then they started working, and not just working, but working really well. To the point where I started feeling uneasy about the future societal implications.

And given my background in prediction, I then started wondering what it might look like to apply the components of a principled mathematical framework to what these neural networks are doing. What are their internal representations? What emergent behaviors can we anticipate? I linked up with Adam and we tried the smallest possible thing we could. And it worked better than we could have hoped for.

That’s what grew into Simplex — we realized there wasn’t really a space for this yet, a program to solve interpretability all the way, and there’s a lot to build on. Our mission at Simplex is to develop a principled science of intelligence, to help make advanced AI systems compatible with humanity. It’s been a really fun collaboration and it’s leading us to a better understanding of how AI models are working internally, which then gives insight into what the heck we’re building as AI systems scale. We’re optimistic these insights can help create more options and guide the development of AGI towards better outcomes for humanity.

You’ve described AI models not as engineered systems, but as ‘grown’ ones…what do you mean by that?

Adam: Yeah, I think this is an under-appreciated aspect of this new technology. Most people assume they’re engineered programs. But they’re not like other software programs — they’re more like grown systems. Engineers set up the context and rules for growing, and then press play on the growing process. What they grow into and the path of their development isn’t engineered or controlled.

And so we’ve ended up with these systems that are incredibly powerful. They are writing, deploying, and debugging code, solving complex mathematical problems at near-human expert levels, and really peering over the edge of what humans can do. This isn’t a future scenario, that’s where we are right now. But we have very little idea of how these systems work internally. And that’s a problem — a lot of safety issues come from this unknown relationship between the behaviors of these systems and their internal processing.

Paul: For example, AI systems are increasingly showing signs of deception, especially when under safety evaluation. Some actively evade safeguards, while others generate plausible explanations that don’t match their true reasoning. These mismatches between external behavior and internal thought process of the AI highlight the need to understand the geometry and evolution of internal activations — without it, we’re largely blind to the system’s intentions.

So how do you go about studying that internal structure?

Paul: We’ve come up with a framework that started from a part of physics called computational mechanics — basically exploring the fundamental limits of learning and prediction by asking what features of the past are actually useful in predicting the future. And then we leveraged this structural part, which looks at the geometric relationship between these different features of the past.

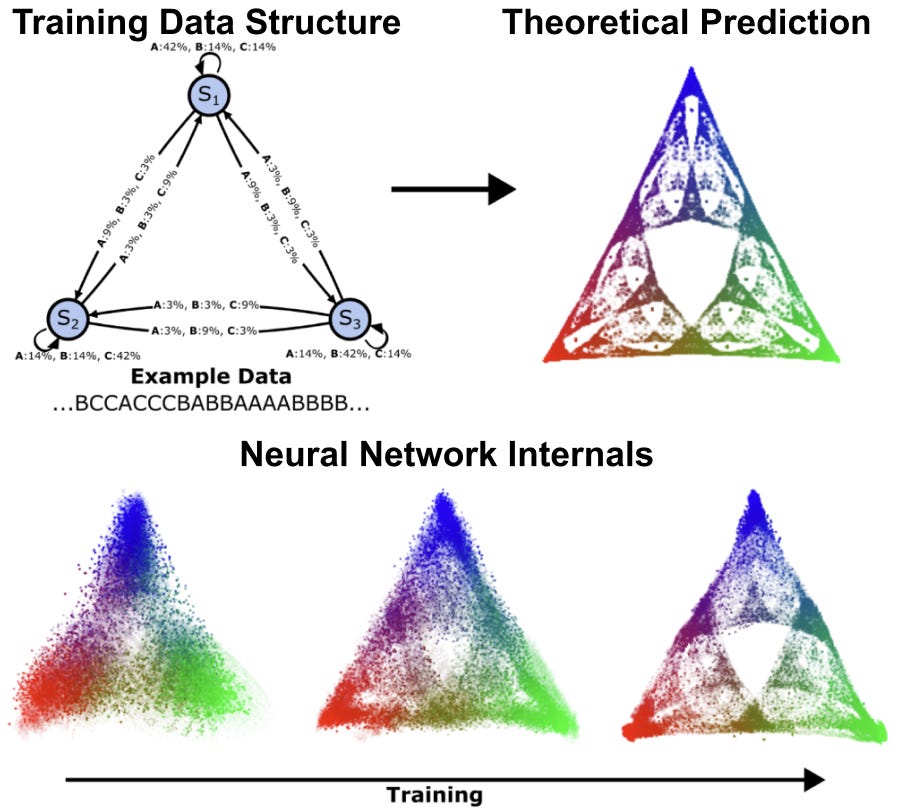

Adam: We discovered that AI models organize information in consistent patterns, like a mental map consisting of specific types of shapes and structures. We call these patterns belief-state geometries, because fundamentally they are the AI model’s internal representation of what’s going on in the world. These patterns often have repeated structure at different scales, leading to intricate fractals. For instance, when we studied a transformer learning simple patterns, we found it creates a fractal structure that looks like a Sierpinski triangle — where each point represents a different belief about the probabilities of all possible futures. As the AI reads more text, it moves through this geometric space, updating its beliefs in mathematically precise ways that our theory predicted. Now that we have this framing, we can start to anticipate those structures. We can start to predict where in the network to look for them, and test whether our theories hold and we can refine them. It’s a huge shift from just poking around and hoping something interpretable falls out.
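To make the idea of belief updating concrete, here is a minimal sketch. It is not Simplex's code. It assumes a toy three-state hidden Markov model whose parameters (`ALPHA`, `X`) are invented for illustration, and the helper names are hypothetical. An observer who sees only the emitted tokens keeps a probability vector over the hidden states and applies Bayes' rule after each token. Each vector is a point in a triangle (the 2-simplex), and the set of points reachable this way is the kind of belief-state geometry described above.

```python
import random

# Toy 3-state hidden Markov model. All numbers are illustrative assumptions.
N = 3
ALPHA = 0.85   # chance that a state emits its "own" token
X = 0.05       # chance of hopping to each other state per step


def emit_prob(state, token):
    return ALPHA if token == state else (1 - ALPHA) / 2


def trans_prob(src, dst):
    return 1 - 2 * X if src == dst else X


def update(belief, token):
    """Bayes update of the belief over hidden states after seeing one token."""
    # Weight each current state by how likely it was to emit the token...
    weighted = [belief[s] * emit_prob(s, token) for s in range(N)]
    # ...then push those weights through one transition step and normalize.
    new = [sum(weighted[s] * trans_prob(s, d) for s in range(N)) for d in range(N)]
    total = sum(new)
    return [p / total for p in new]


def belief_path(tokens):
    """Beliefs visited while reading a token sequence, starting from uniform."""
    belief = [1 / N] * N
    path = [belief]
    for token in tokens:
        belief = update(belief, token)
        path.append(belief)
    return path


def sample_tokens(length, rng):
    """Generate tokens from the toy process (emit, then transition)."""
    state = rng.randrange(N)
    tokens = []
    for _ in range(length):
        tokens.append(rng.choices(range(N), [emit_prob(state, t) for t in range(N)])[0])
        state = rng.choices(range(N), [trans_prob(state, d) for d in range(N)])[0]
    return tokens


if __name__ == "__main__":
    rng = random.Random(0)
    for belief in belief_path(sample_tokens(5, rng)):
        print([round(p, 3) for p in belief])
```

Plotting the beliefs reached over many long sequences shows which region of the triangle the process visits. For some processes that set is a fractal; this toy model is not claimed to reproduce the Sierpinski-like structure from the experiment described above.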

How does this differ from what others in the field are doing?

Adam: There’s a field of research called interpretability, which is trying to understand how the internals of AI systems carry out their behavior. It is very similar to the type of neuroscience I used to do, but applied to AI systems instead of to biological brains. There’s been an enormous amount of progress over the last few years, and a lot of interest because of the growth of LLMs. In many ways interpretability has even been able to surpass the progress in neuroscience.

However, the approach is often highly empirical. Despite all the progress, the field is often left wondering to what extent the findings apply to other situations that weren’t tested, or if their methods are really robust, or how to make sense of their findings. In a lot of ways, we still don’t really know what the thing we are looking for is, exactly. What does it look like to understand a system of interacting parts (whether they be biological or artificial neurons) whose collective activity brings about interesting cognitive behavior? What’s the type signature of that understanding?

Paul: What’s missing is a theoretical framework to guide empirical approaches. Our unique advantage is that, by taking a step back and really thinking about the nature of computation in these trained systems, we can anticipate the relationship between the internal structure and behaviors of AI systems. One of the most important points of this framework is that it is both rigorous and also amenable to actual experiments. We aren’t doing theory for theory’s sake. The point is to understand the reality of these systems. So it’s this feedback loop between theory and experiment that allows us to make foundational progress that we can trust and build on in a way that’s different from most other players in the field.

How might this type of geometric relationship between features of the past differ across sensory modalities — language, images, sounds, etc.? Is there a difference between how it works in humans and AI systems?

Paul: This is an area we’re really interested in exploring more. There’s some evidence that suggests that neural networks trained on different modalities converge on similar geometric representations of the world. It seems that no matter which modalities an intelligent system uses to interact with the world, it’s trying to reconstruct the whole thing.

It raises some fascinating questions — to what extent are different modalities and even different intelligences converging on a shared sense of understanding? Is there a unique answer to what it’s like to be intelligent? And if so, maybe that’s useful for increasing the bandwidth of communication among different intelligent beings? And if not — if each intelligent thing understands the world in a valid but incompatible way — that’s maybe not great for hopes of us being able to come to a shared understanding and aligned goals? So that’s one thing we’re very interested in as we learn how models represent the world.

Adam: I think there’s also a really interesting opportunity to understand ourselves better. It opens up this entire new field of access to understand intelligence. If you’re a neuroscientist, you no longer have to decide between studying a human brain and having very low access, or studying a rat and having more access at the expense of cognitive behavior. With neural networks, you can look at everything.

We can even potentially engineer neural networks to whatever level of complexity, whatever kind of data, and whatever kind of behavior we want to study, even at a level that exceeds human performance. And it opens up this new, really fast feedback loop between theory and experiment — it’s an unprecedented opportunity to understand intelligence in a very general sense.

Given your work is really about understanding intelligence more broadly, beyond just AI systems, where do you hope it will lead?

Adam: Previously, there’s been no framework that gives us any kind of foothold to talk about this relationship between internal structure and outward behavior. We’re trying to build this kind of principled framework for how to think about these questions. It could be applied to LLMs in order to understand them and make them safer. But the general framework also has the promise of being applicable to other systems where we’re trying to understand the relationship between the internal structure and outward behavior. And those other systems could be biological brains.

Paul: Part of the value we’re providing is also a shared understanding, or ground truth for how these systems work. Today, people have different opinions about what these systems are — some maintain that the current AI paradigm will fall short of AGI or superhuman intelligence, while a growing contingent finds it obvious that you can bootstrap a minimal amount of intelligence to become superhuman across the board. More concerning is that informed technologists disagree about whether ASI (artificial superintelligence) will most likely lead to human extinction or flourishing. Even among experts, people really talk past each other. AI safety may or may not be solvable. Part of our work is to establish a scientific foundation for coming to a consensus on that, and identifying paths forward.

I’m hopeful that we can elevate the conversation by creating a shared understanding of what the science says, so it’s no longer doomers and optimists, but rather people working together to figure out the implications of what we’re building, and how we steer towards the kind of future we want. It’s a lofty goal but we think it’s possible. As we continue to build our understanding of structures in our own networks, we’ll hopefully be able to leverage that for a societal conversation about what it is we want to be building towards.

Learn more about Simplex’s insights and follow their technical progress here.

What does it take to make — and keep — a planet habitable?

For over 50 years, humans have explored space, seeking new homes for life. Life on Mars is the stuff of great science fiction. And the work of actually creating sustainable habitats and ecosystems beyond Earth has, by extension, seemed like a far-off prospect. Now, that may be changing.

Edwin Kite is a planetary scientist and current resident at Astera who, together with his team and collaborators, is working on defining a contemporary Mars terraforming research agenda. We spoke with him about what it would take to warm Mars up enough for life to thrive, how open source tools and datasets help research communities build towards the future, and what drives a scientist to investigate how people might create ecosystems beyond Earth.

You’ve been working on Mars science for years. Why this planet?

The biggest unanswered question in Earth science is how and why our planet stayed habitable for life.

For example, for nine-tenths of Earth’s history, our planet was uninhabitable for humans because there was too little oxygen to breathe, and we still don’t know why oxygen levels rose.

These are especially interesting questions when you consider that Mars was once habitable, but lost its ability to sustain life. Mars holds a record of that environmental catastrophe, and may hold traces of life that established itself there before that natural disaster. We are in a golden age of Mars science today, with two plutonium-powered rovers on the surface and an international fleet of spacecraft in orbit. We can deeply explore the planet for signs of this record and seek answers to our questions about what happened. It’s a great time to be doing Mars science.

Understanding what made and what ended Mars’s early habitability can also help us better understand Earth’s history of life and explore the possibility of re-making Mars habitable. Mars has plenty of water and carbon, and its surface receives about as much sunlight as does all of Earth’s land. Sunlight powers almost all of our biosphere, so it’s tantalizing to think of what kind of biosphere sunlight might support in the future on Mars. It’s by coevolution with photosynthetic life that people built cities.

Our ancestors were, in Darwin’s words, hairy forest-dwellers. They moved outwards and built tools like spacesuits and sealskin coats to allow human life in the face of once-unimaginable hazards, like hard vacuum and winter snow. But this approach can only take us so far. Throughout time, space has been forbidding to life, with radiation, micrometeoroids, and cold. If we are going to have an adventure that’s endless, we’ll need to adapt the environment to ourselves. I don’t know how we’ll do this, but the bigger rocky and icy worlds of our solar system seem like a logical place to start. Many are rich in life-essential volatile elements, all can offer radiation protection, and all have enough gravity to hold onto a stable atmosphere.

What’s the scope of your current project at Astera, focused on terraforming?

It’s been understood for over 50 years that there would be two steps to making Mars more Earth-like: first warm the planet up to allow photosynthesis, which is relatively quick and easy, then build up the oxygen level using photosynthesis. We’re looking at the first step, surface warming.

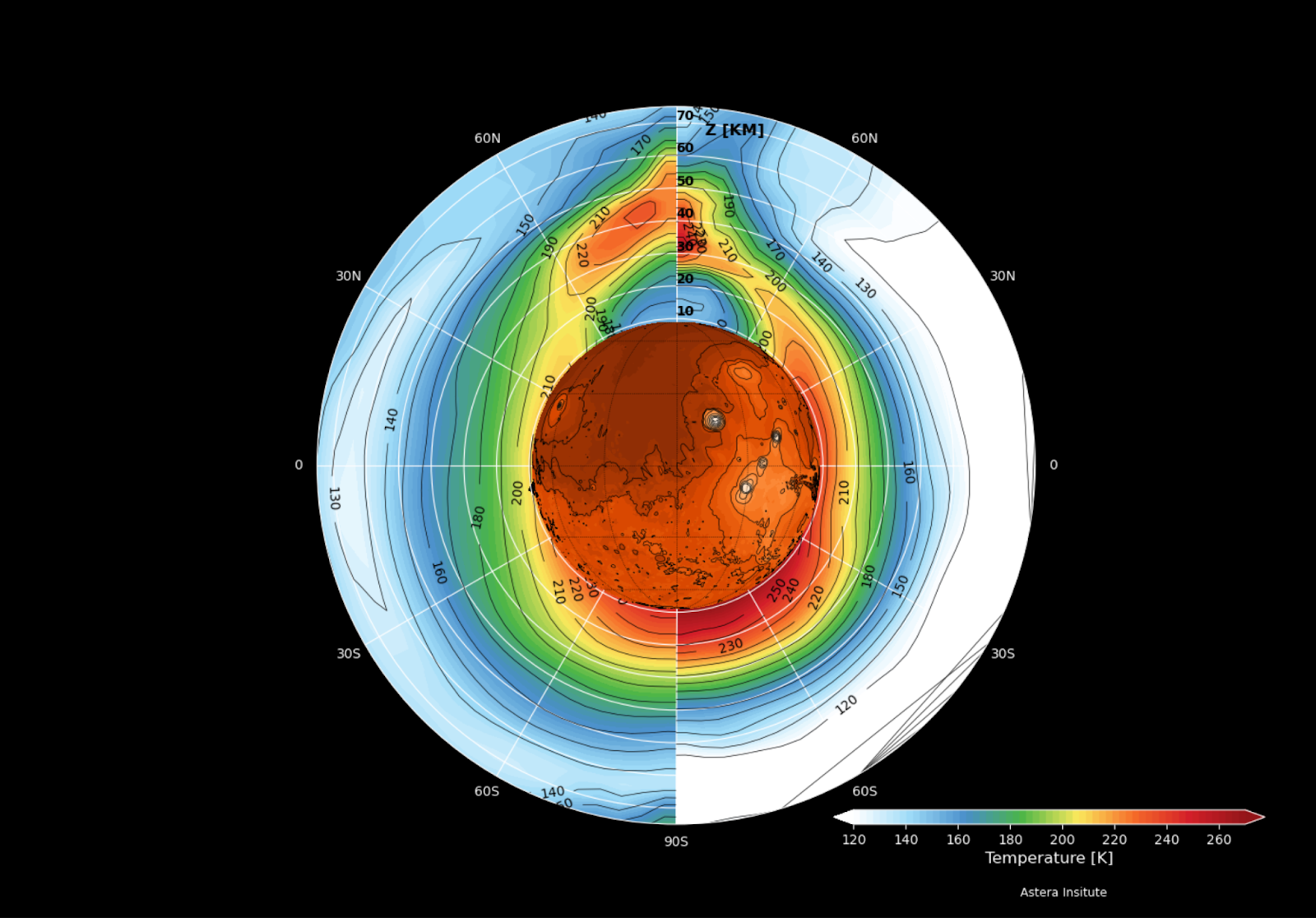

Mars is too cold for stable liquid water: the average temperature is around 210 K (about -60 °C), and atmospheric pressure is only ~6 millibars. Warming the planet by 30 to 50 °C could melt near-surface ice, enabling surface habitability and photosynthesis. There are lots of ways to warm Mars, including greenhouse gases and orbiting mirrors. Our team at Astera is investigating a warming approach based on engineered aerosols, specifically nanoparticles that can forward-scatter sunlight and block thermal infrared. Compared to greenhouse gases, they’re four orders of magnitude more mass-efficient. That kind of efficiency matters: you want to get the biggest radiative payoff. If we want to make progress in this century, we need to use the materials that are already readily available on Mars, rather than shipping them in.
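
As a rough sanity check on the ~210 K figure, here is a minimal zero-dimensional energy-balance sketch. It is a textbook calculation, not the team's model. The solar flux at Mars (about 586 W/m²) and the planetary albedo (about 0.25) are assumed textbook values that do not come from the interview, and the function names are hypothetical.

```python
# Zero-dimensional energy balance for a planet with a negligible greenhouse effect.
# Assumed inputs (not from the interview): solar flux and Bond albedo for Mars.

SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4


def equilibrium_temperature(solar_flux, albedo):
    """Temperature (K) at which emitted thermal radiation balances absorbed sunlight."""
    absorbed = solar_flux * (1.0 - albedo) / 4.0  # averaged over the whole sphere
    return (absorbed / SIGMA) ** 0.25


def extra_surface_emission(t_now, warming):
    """Extra thermal emission (W/m^2) from a surface that is `warming` kelvin hotter."""
    return SIGMA * ((t_now + warming) ** 4 - t_now ** 4)


MARS_SOLAR_FLUX = 586.0  # W/m^2, assumed textbook value
MARS_ALBEDO = 0.25       # dimensionless, assumed textbook value

t_now = equilibrium_temperature(MARS_SOLAR_FLUX, MARS_ALBEDO)
print(f"Equilibrium temperature: {t_now:.0f} K")

for warming in (30.0, 50.0):
    extra = extra_surface_emission(t_now, warming)
    print(f"+{warming:.0f} K needs about {extra:.0f} W/m^2 of extra trapped heat")
```

The second function estimates how much additional outgoing heat a warming agent would have to hold back at the surface for a given temperature rise. It ignores feedbacks, seasons, and day-night cycles, so treat the numbers as order-of-magnitude only.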

Along with the simulation work and delivery prototyping that we’re doing at Astera, we are working with collaborators at Northwestern University to batch-manufacture and test the most promising particles. This work is part of an extended collaboration involving scientists from Aeolis Research, JPL, the University of Central Florida, MIT Haystack Observatory, and the University of Chicago Climate Systems Engineering Initiative, among other institutions.

What properties of aerosols lead to warming the planet, rather than cooling it?

On a clear-sky night when we can see the stars, it’s typically cooler than on a cloudy night. So clouds (a form of aerosol) act as a warm blanket. Clouds also bounce sunlight back to space (a cooling effect). For any aerosol, the net effect (warming minus cooling) depends on the size, shape, and composition of the aerosol. To warm Mars, we need to choose or design a combination of size, shape, and composition that gives a strong warming effect.
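
The warming-minus-cooling trade-off can be illustrated with a textbook one-layer gray model. This is a toy sketch, not the team's climate model or screening tool. The parameters `reflectivity` (the fraction of sunlight the aerosol layer bounces back to space) and `ir_emissivity` (the fraction of thermal infrared it absorbs) are hypothetical stand-ins for the combined effect of size, shape, and composition. The Mars solar flux and albedo defaults are assumed textbook values.

```python
# Toy one-layer gray model: an aerosol layer reflects some sunlight (cooling)
# and absorbs and re-emits some thermal infrared (warming).
# All parameter values are illustrative assumptions, not measured particle properties.

SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4


def surface_temperature(reflectivity, ir_emissivity, solar_flux=586.0, albedo=0.25):
    """Surface temperature (K) beneath a single aerosol layer.

    Simplification: sunlight reflected by the layer is lost to space, and
    multiple reflections between layer and surface are ignored.
    """
    absorbed = solar_flux * (1.0 - albedo) * (1.0 - reflectivity) / 4.0
    # The layer radiates half of the infrared it absorbs back down to the surface.
    return (absorbed / (SIGMA * (1.0 - ir_emissivity / 2.0))) ** 0.25


def net_effect(reflectivity, ir_emissivity):
    """Temperature change (K) relative to having no aerosol layer at all."""
    return surface_temperature(reflectivity, ir_emissivity) - surface_temperature(0.0, 0.0)


cases = [
    ("blanket-like (low reflectivity, high IR absorption)", 0.02, 0.30),
    ("mirror-like (high reflectivity, low IR absorption)", 0.30, 0.10),
]
for label, r, e in cases:
    print(f"{label}: {net_effect(r, e):+.1f} K")
```

In this toy model the layer warms the surface only when `reflectivity` is less than half of `ir_emissivity`, which is one simple way to see why the optical design of the particle decides the sign of the effect.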

We also need to pay attention to particle mass. In our recent Science Advances paper, we showed that certain nanoparticle designs can achieve the same warming effect as fluorocarbon gases (a particularly potent greenhouse gas), but with ~50,000 times less mass. That matters, because even with the improved launch economics we’re seeing, getting mass to Mars is still expensive, so we need to keep the particle factory as lightweight as possible.

How would these nanoparticles be made?

The current concept involves dispersing nanoparticles into Mars’ atmosphere, where they remain aloft for long periods. These particles — which could include graphene disks or metal ribbons or even natural salts — selectively scatter shortwave solar radiation while blocking outgoing infrared. This alters the radiative balance, raising surface temperatures.

We’re exploring multiple production pathways. For example, it may be possible to fabricate aerosols using materials from Mars regolith, or using Mars’ CO2-rich air as the feedstock for making graphene disks as a byproduct of oxygen production. With solar-powered graphene production, the basic ingredients for warming Mars could be Mars’ air and sunlight.

Particles also have another advantage: they start working within months and stop working when removed. That makes the system controllable and potentially reversible. So we could switch off or adjust a well-designed intervention as warming proceeds.

We hear your team has already made advances on researching particles 👀

Yes! One of our researchers, Alex Kling, developed an open-source screening tool to assess the warming efficiency of different particle types. It’s motivated in part by a conversation at the Tenth Mars conference, where Mars climate researchers agreed we needed something like this for natural aerosols as well. And I hope it will also be used by exoplanet researchers. Natural aerosols can be really important in extending the habitable zone, in both warming and cooling directions (for example, the type of high altitude organic haze found on Saturn’s moon Titan can be really effective in cooling a world’s surface).

Our tool is already available on GitHub, and it’s forming the basis for our next set of experiments. You can find it here:

We’re also going to batch-manufacture and test particle interactions and production protocols — essentially, answer questions like, how hard is it to make these materials from scratch? What kinds of impurities or degradation modes do we need to model? And we’ll continue sharing our results openly through preprints, on Zenodo, and on Substack.

Why is it important to do this work as open science?

Making all our findings public is necessary for informing a spacefarer’s consensus about what to do with those findings. The Outer Space Treaty says, “The exploration and use of outer space […] shall be the province of all humankind,” and every country has signed up to that.

Because we’re starting a new field, we have to work in a way that others can plug into. We have to seed open standards, protocols, and cultural momentum now, to create infrastructure that enables many organizations to work on terraforming in parallel. We’re building tools, datasets, and protocols that others can test, benchmark against, and improve. That includes publishing aerosol designs, experimental methods, and model inter-comparison frameworks — both the successes and the failures. We need to create a foundation that the whole research community can build on. And culturally, this aligns with NASA’s approach — raw rover images are public as soon as they arrive — and it’s a model that’s worked well for decades.

How do you think about the ethical questions that arise from a proposal to terraform Mars?

Perhaps Mars should remain untouched, as a planet-sized wilderness park that people bypass on our way to the stars? Or we could see Mars as an environmental restoration problem: undoing the collapse of a once-habitable planet?

Personally, I think a night sky full of living worlds is better than one that’s dead. Mars is Galactic gardening for beginners. If Mars is lifeless, then there’s no ecosystem to protect, and creating a new one might be the most meaningful thing we could do with it. But more work is needed to search for life on Mars, and decisions about Mars’ future should be made within a democratic framework. Any serious terraforming effort would take decades, if not centuries. On those long timescales, our politics and our worldviews will change. What won’t change are the basic scientific constraints, and it’s the science that we propose to study.

How can people learn more?

You can read the essay with Robin Wordsworth or check out our latest technical study. And keep an eye out for our forthcoming technical Substack!